While the technology isn’t perfect for anyone, it seems to be a lot further from perfect for some people compared to others.

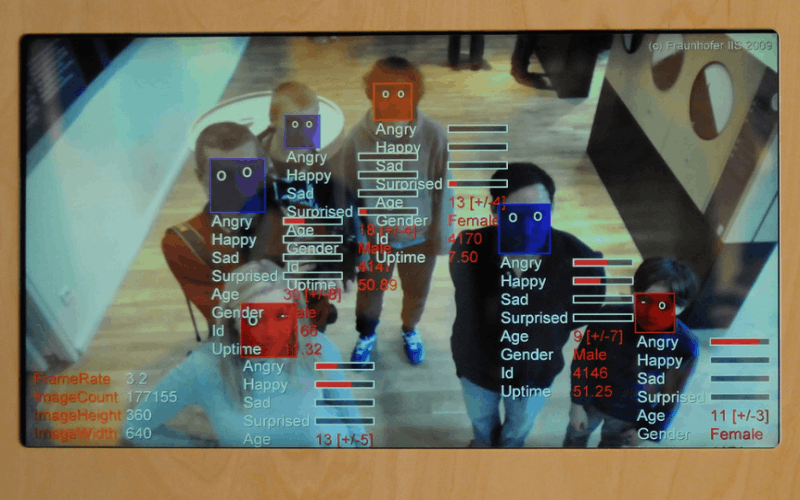

According to a recent research project by the Massachusetts Institute of Technology, it may be over 40 times less perfect. The study shows that modern facial recognition software averages a 0.8% error rate for determining the sex of lighter skinned women, as opposed to a 34% error rate for determining the sex of darker skinned women. The technology experienced its all-time lowest rate of error when working with light-skinned males.

The aforementioned experiment had a team of researchers, led by Joy Buolamwini, a researcher in the MIT Media Lab’s Civic Media group, gather a collection of 1,200 images of faces in a spectrum of race and skin tone. They then subjected those faces to three high-profile facial recognition systems from large tech companies. One of the systems promised an accuracy of 97%, but the researchers found that the sample group used by the company to generate this statistic were over four-fifths white and three-quarters male. These were top-of-the-line programs, programs that are becoming more and more popular in modern technologies, and as their uses become more frequent, these race and sex-based recognition problems could become much more drastic.

“What’s really important here is the method and how that method applies to other applications,” says Buolamwini. “The same data-centric techniques that can be used to try to determine somebody’s gender are also used to identify a person when you’re looking for a criminal suspect or to unlock your phone.” Meanwhile, corporate giants are trying to up their use of facial recognition software in an attempt to bring accuracy and automation to many of their services. Buolamwini hopes that her and her team’s research will help raise awareness of this issue and force companies to slow their advances before basing so much of modern technology on a software that has only a two-thirds success rate with certain individuals.

Buolamwini wonders whether tech developers of the facial recognition programs knew of the rate of error in darker-skinned people but decided to push the technology through anyway. “To fail on one in three, in a commercial system, on something that’s been reduced to a binary classification task, you have to ask, would that have been permitted if those failure rates were in a different subgroup?” She also warns about the dangers of relying on benchmarks for “the standards by which we measure success”, as the 97% benchmark measured above was clearly designed to produce a fake statistic that would lead to an incorrect assumption about the accuracy of the product.

But this isn’t the first blunder in the market of facial recognition software’s ability to recognize nonwhite individuals, and it certainly isn’t the most embarrassing: Three years ago, Google’s photo app mistakenly tagged black people as gorillas, which caused much more buzz than the findings from this small team of researchers. Wall Street Journal’s article says that this event shows “the limits of algorithms”, which, when racial recognition is confirmed, is somewhat accurate.

But much to her dismay, Buolamwini is working against a quickly ticking clock, as facial recognition software is already being used to pick out people in crowds and match them with criminal offenses: An article on The Guardian featured a recent story in which an undercover investigator met with a drug dealer, and was able to snap several pictures of the dealer’s face on his phone while he held it up to his ear and pretended to take a call. Going off of the images, the police used facial recognition software to locate a suspect, convict him, and sentence him to jail. But unless these officers used a different software than the ones shown above, this study suggests that the conviction and any others like it have a one-in-three chance of being inaccurate.