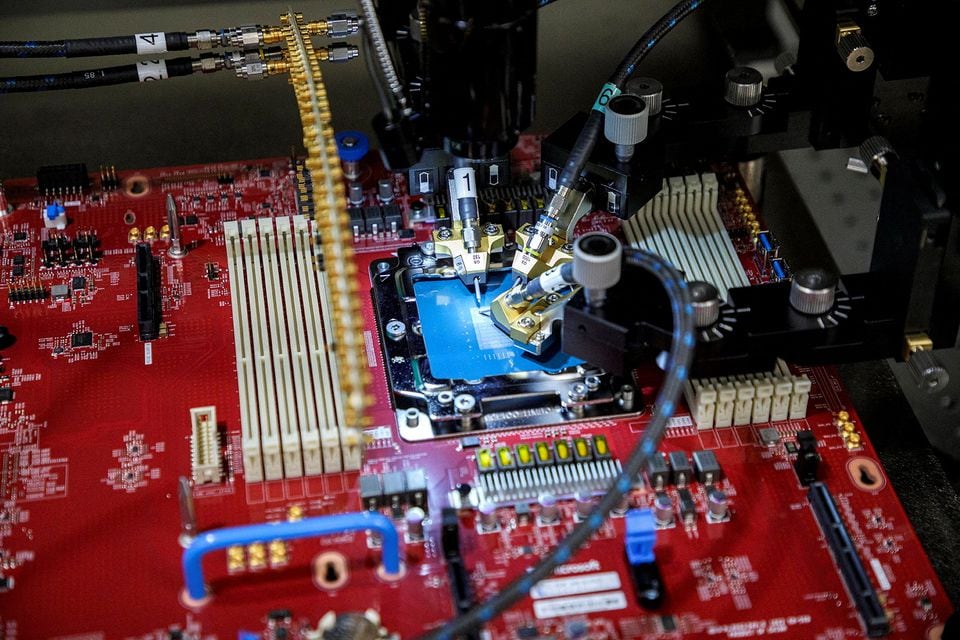

Microsoft (MSFT.O) announced two custom-designed computing chips on Wednesday, joining other large tech companies that are bringing critical technology in-house because of the high cost of providing artificial intelligence services.

According to Microsoft, the chips will not be sold; they will be used to power the company’s subscription software products and the Azure cloud computing service.

Microsoft unveiled the Maia chip at its Ignite developer conference in Seattle. The chip is designed to speed up AI computing tasks and to serve as the basis for the company’s $30-a-month “Copilot” service for corporate software customers, as well as for developers who wish to create custom AI services.

The Maia chip was designed to run large language models, a class of AI software that powers Microsoft’s Azure OpenAI service and is a product of Microsoft’s partnership with OpenAI, the company that created ChatGPT.

Microsoft, Alphabet (GOOGL.O) and other tech giants are struggling with the cost of providing AI services, which can be ten times higher than that of more conventional offerings like search engines.

Microsoft executives have said the company intends to contain those costs by routing its extensive efforts to integrate AI into its products through a common set of foundational AI models. The Maia chip, they said, is tailored for that kind of work.

“We believe that this offers us a means to offer our clients better solutions that are quicker, less expensive, and of higher quality,” stated Scott Guthrie, executive vice president of Microsoft’s cloud and artificial intelligence division.

Microsoft also said that, starting next year, it will offer Azure customers cloud services that run on the newest flagship chips from Nvidia (NVDA.O) and Advanced Micro Devices (AMD.O). Microsoft said it is testing GPT 4, the most advanced model from OpenAI, on AMD’s chips.

Ben Bajarin, chief executive of the analyst firm Creative Strategies, said Microsoft’s move does not displace Nvidia.

According to him, Microsoft can offer AI services in the cloud using the Maia chip until smartphones and personal computers can handle them.

“Microsoft has a very different kind of core opportunity here because they’re making a lot of money per user for the services,” Bajarin stated.

Microsoft’s second chip, also unveiled on Wednesday, is meant both to cut costs internally and to answer Amazon Web Services, the company’s chief cloud rival.

The new chip, Cobalt, is a central processing unit (CPU) made with technology from Arm Holdings (O9Ty.F). Microsoft said Wednesday that it has already been testing Cobalt to power Teams, its business messaging app.

However, Microsoft’s Guthrie said his company also hopes to sell direct access to Cobalt to compete with the “Graviton” line of in-house processors offered by Amazon Web Services (AMZN.O).

“We are designing our Cobalt solution to ensure that we are very competitive both in terms of performance as well as price-to-performance (compared with Amazon’s chips),” Guthrie stated.

AWS will hold its own developer conference later this month. According to a spokeswoman, its Graviton processor has 50,000 users.

After Microsoft announced its chips, the spokesperson said, “AWS will continue to innovate to deliver future generations of AWS-designed chips to deliver even better price-performance for whatever customer workloads require.”

Microsoft provided few technical details that would allow a comparison of the chips’ competitiveness with those of established chipmakers. According to Rani Borkar, corporate vice president for Azure hardware systems and infrastructure, both chips are made with 5-nanometer manufacturing technology from Taiwan Semiconductor Manufacturing Co. (2330.TW).

She added that the Maia chip would be connected with standard Ethernet network cabling rather than the more costly Nvidia networking technology that Microsoft used in the supercomputers it built for OpenAI. “You will see us going a lot more the standardization route,” Borkar told Reuters.