So What Is A Deepfake?

(If you already know the answer to this question, skip to the next section)

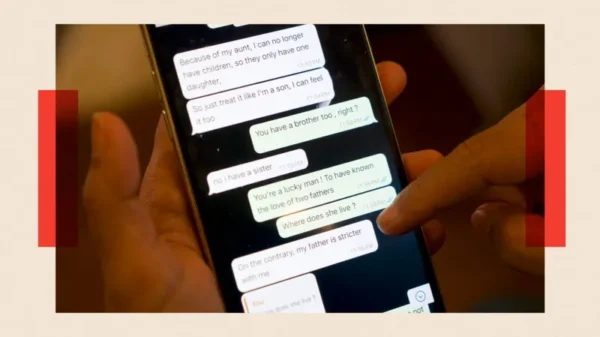

Deepfakes have been a fairly underground technology for some time. The technology works by mapping the facial expressions of one person onto the face of another using a computer program. For example, I could spend ten minutes talking to myself in front of a camera, run the footage through a deepfakes program, and replace my face with the face of, say, Donald Trump.

And boom. With surprising accuracy, I am left with a video of what looks very much like Donald Trump saying a bunch of things that Donald Trump has probably never said before. Of course, my voice still doesn’t sound like Trump’s, but seeing as just about every American over the age of 15 has some kind of Donald Trump impression, it wouldn’t be too hard to find one or two that could be passed off as the real thing.

Obviously, then, Deepfakes could be a very powerful tool for creating fake news. When I last wrote on the topic about a month ago, I stated that the technology has two primary applications:

The first serves against those being faked: Normal people with solid voice impressions could use the technology to create very convincing fake videos of important people doing incriminating things, and pass them off as real with relative ease.

The second serves for those being faked, or for those famous enough to theoretically be faked: If they were ever caught on camera doing or saying something incriminating, they could pass it off as a fake to escape the negative effects.

So What’s Changed?

Deepfakes have gone professional. For those who never knew or don’t remember, the original “Deepfakes” program was created by a single person and released as a desktop app. Its original purpose was to allow individuals to easily put celebrity faces over clips of porn stars, making fake celebrity pornography easy to produce.

And since the technology began to be recognized as applicable to much more than pornography, a team of professionals has decided to make the algorithms much, much better.

According to an article on ScienceNews, the team will show off this new system tomorrow, August 16th, at SIGGRAPH, an annual computer graphics conference. The page for the presentation claims that the technology will allow for “full control over a target actor by transferring head pose, facial expressions, and eye motion with a high level of photorealism.”

From the article on ScienceNews:

“Computer scientist Christian Theobalt of the Max Planck Institute for Informatics in Saarbrücken, Germany, and colleagues tested their program on 135 volunteers, who watched five-second clips of real and forged videos and reported whether they thought each clip was authentic. The dummy videos fooled, on average, 50 percent of viewers.”

And while 50 percent alone may seem like a high number, the real share of people who would be fooled is probably much higher: The subjects taking part in this experiment were told that some of the clips were going to be fake, so they scrutinized them far more closely than ordinary people would upon seeing a video clip. This heightened scrutiny led the subjects to believe that 20 percent of the totally normal, real clips they were shown were also fakes.
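To put those two numbers side by side, here is a rough back-of-the-envelope sketch in Python. It is not from the study: the function name `viewer_accuracy` is made up for illustration, and it assumes viewers saw an equal mix of real and fake clips, which the article does not say. The 50 percent and 20 percent figures are the ones quoted above.

```python
def viewer_accuracy(fooled_rate: float, false_alarm_rate: float,
                    fake_share: float = 0.5) -> float:
    """Fraction of all clips a viewer labels correctly.

    fooled_rate      -- share of fake clips mistaken for real (0.50 per the article)
    false_alarm_rate -- share of real clips mistaken for fake (0.20 per the article)
    fake_share       -- share of clips that are fake (ASSUMED 0.5, not from the article)
    """
    caught_fakes = (1.0 - fooled_rate) * fake_share
    trusted_reals = (1.0 - false_alarm_rate) * (1.0 - fake_share)
    return caught_fakes + trusted_reals


# Using the article's figures and the assumed 50/50 mix of clips.
accuracy = viewer_accuracy(fooled_rate=0.50, false_alarm_rate=0.20)
print(f"Clips labeled correctly: {accuracy:.0%}")
```

Under that assumed 50/50 mix, viewers who knew fakes were in play would still label only about 65 percent of clips correctly, which is not far above what a coin flip would get.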

For better or for worse, the team presenting at SIGGRAPH has created a Deepfakes-style program with capabilities far beyond anything seen before.

But this program certainly isn’t intended to be used for fake news, and the full software will probably be too costly for most fake-news creators to afford. More than likely, its intended application is for professional and amateur filmmakers, who could use the swapping feature for quick-and-easy facial MoCap on real people (young Princess Leia at the end of Rogue One: A Star Wars Story) or fake, giant purple people (Thanos).

Still, it’s hard to deny this program’s potential for producing some seriously convincing fake video clips. And even if the full software is never released at a price fake-news creators can afford, its advancements may make their way into other programs down the line.

When I first wrote about Deepfakes, I mentioned some other programs in development to detect and counter them. I haven’t heard anything from those programs in some time, but let’s hope that they get up and running soon.

Alyssa

August 16, 2018 at 4:12 pm

Although this program is cool, it can do so much harm and should be shut down

Maya Asregadoo

August 21, 2018 at 3:17 am

I agree that this program is more harmful than helpful.